General context: particle physics

The question is conceptually quite simple: what are we made of? Particle physics attempts to answer it by studying elementary particles (i.e. those that are not made of anything else) and how they interact to form composite systems, all the way up to the human-scale matter that we can touch and see. At very small scales, particles are described by fields that obey quantum dynamics (each particle appears as a quantum of vibration of the corresponding field). In this mathematical description, symmetries are the cornerstone of the theory. For both free and interacting particles, specific invariances strongly constrain the field dynamics, and therefore the behaviour of the particles.

The Standard Model

The Standard Model is the current mathematical framework, and it makes extensive and precise predictions for a wide range of observations at the sub-atomic scale. Its basic ingredients are quantum fields and symmetries. It has very attractive features, such as describing three of the four fundamental interactions with the same symmetry principle, and successful predictions, like the existence of weak neutral currents, discovered at CERN in 1973. Despite this, a few observational and conceptual questions remain open. To mention just one example of each: gravitation cannot be described within the Standard Model, and we know from experiment that neutrinos are massive, whereas they are massless in the Standard Model.

How to improve our understanding of Nature?

One of the most appealing features of the Standard Model is the unification of the electromagnetic and weak forces into the so-called electroweak interaction. This unification only becomes manifest at high energy, which is why the two interactions look so different at our scale. What drives the transition between the unified interaction and the way the two forces appear to us? The Higgs field and its associated particle, the Higgs boson, play a crucial role in answering this question. The discovery and study of the Higgs boson are therefore a natural place to push our understanding further.

Another option (and this list is clearly not exhaustive) is to study particles that seem somewhat peculiar compared with the others. In the Standard Model, the top quark stands apart because it is by far the heaviest matter particle. No one knows why the top quark is so different, and it may be because it is connected to a new set of not-yet-known particles and interactions. It is therefore also a good place to search for laws beyond the Standard Model.

Particle collider experiments

To probe Nature at this scale, one needs to reach the highest energies, since probing smaller distances always requires higher energy. One way to concentrate a lot of energy in a small volume is to collide very energetic particles. Only a few facilities in the world reach collision energies high enough to study physics at the electroweak scale. The previous one was the Tevatron near Chicago (proton-antiproton collisions at 1.96 TeV). The only machine currently operating is the Large Hadron Collider (LHC) near Geneva (proton-proton collisions at 13 TeV).
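The link between energy and distance can be made quantitative with a rough estimate: a probe of energy E resolves distances of order ħc/E. The sketch below uses ħc ≈ 0.1973 GeV·fm. Treating the full energy as the probe energy is a simplification, since in a proton collision only part of the energy goes into the hard scattering of the constituents.

```python
# Order-of-magnitude estimate: distance resolved ~ hbar*c / E.
HBAR_C_GEV_FM = 0.1973269804  # hbar * c in GeV * femtometre
FM_TO_M = 1e-15               # 1 fm = 1e-15 m


def probe_distance_m(energy_gev: float) -> float:
    """Rough distance scale (in metres) probed with an energy of energy_gev GeV."""
    if energy_gev <= 0:
        raise ValueError("energy must be positive")
    return HBAR_C_GEV_FM / energy_gev * FM_TO_M


if __name__ == "__main__":
    for energy in (1.0, 1000.0, 13000.0):
        print(f"{energy:>8.0f} GeV -> {probe_distance_m(energy):.1e} m")
```

At 1 GeV this gives about 2e-16 m, a fraction of the proton size, while 1 TeV corresponds to about 2e-19 m.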

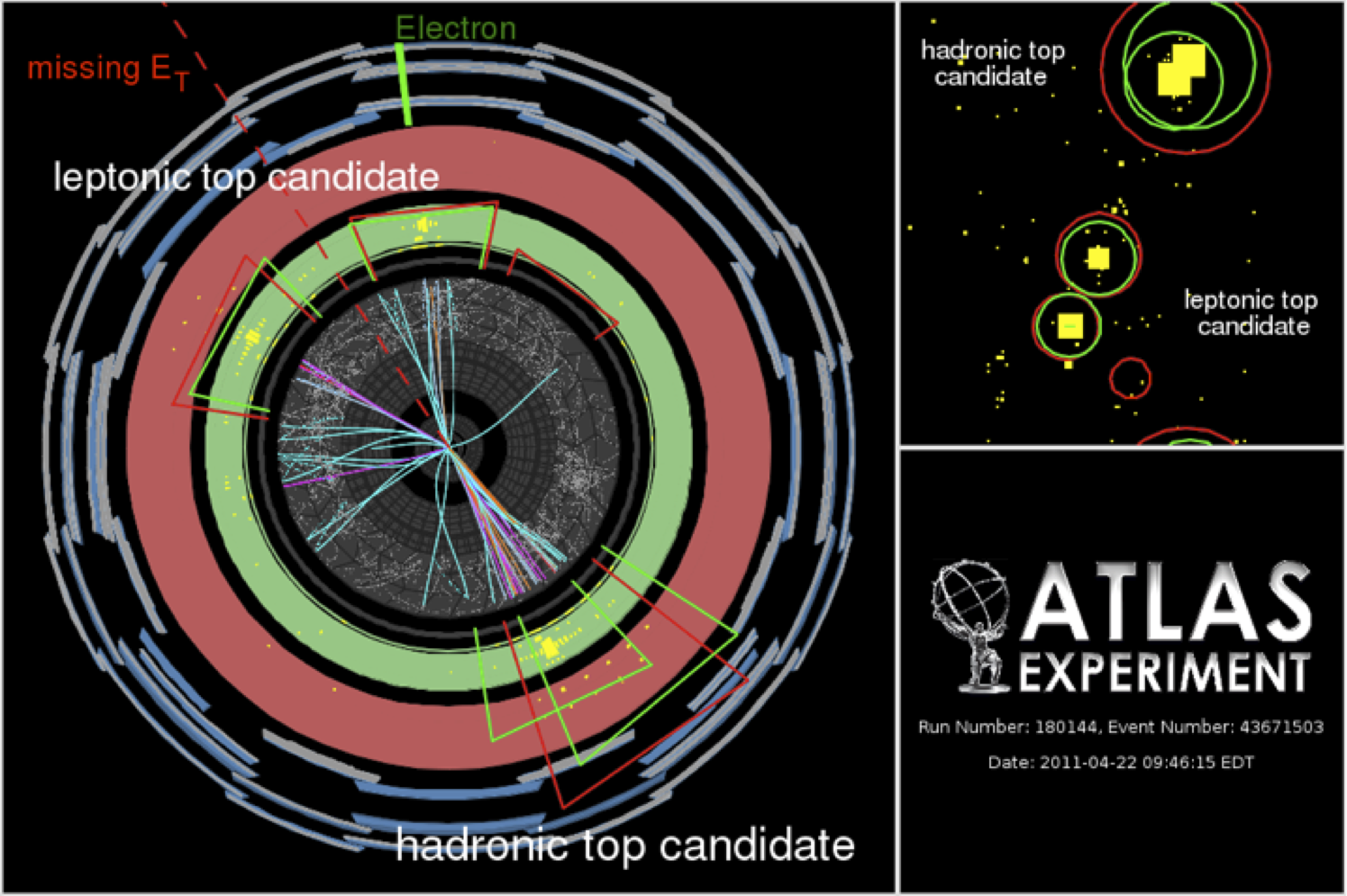

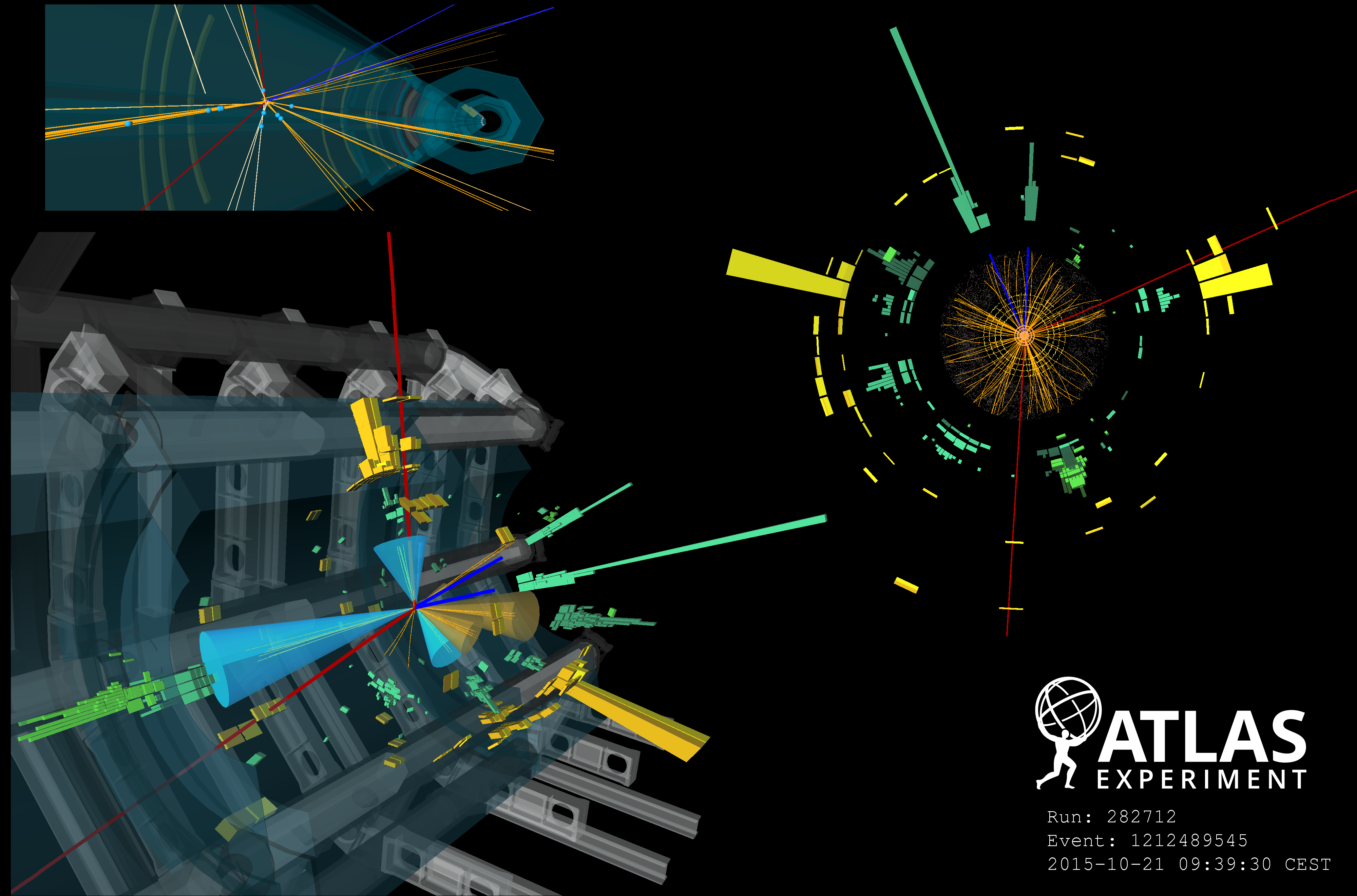

To establish what happened during a collision at the microscopic level, all the outgoing particles it produced must be measured. This is done with very complex detectors that measure energy deposits and tracks in all directions. The hadronic calorimeter of the ATLAS experiment, one of the sub-detectors I work on, takes part in these measurements. Together, the sub-detectors allow physicists to reconstruct the full collision, knowing for example that there was an electron in one direction and a muon in another. Sophisticated algorithms, called particle reconstruction and identification, have to be developed to recognize a given particle from all the energy deposits seen by the detector. In particular, I worked on the identification of the tau lepton (a kind of very heavy electron).

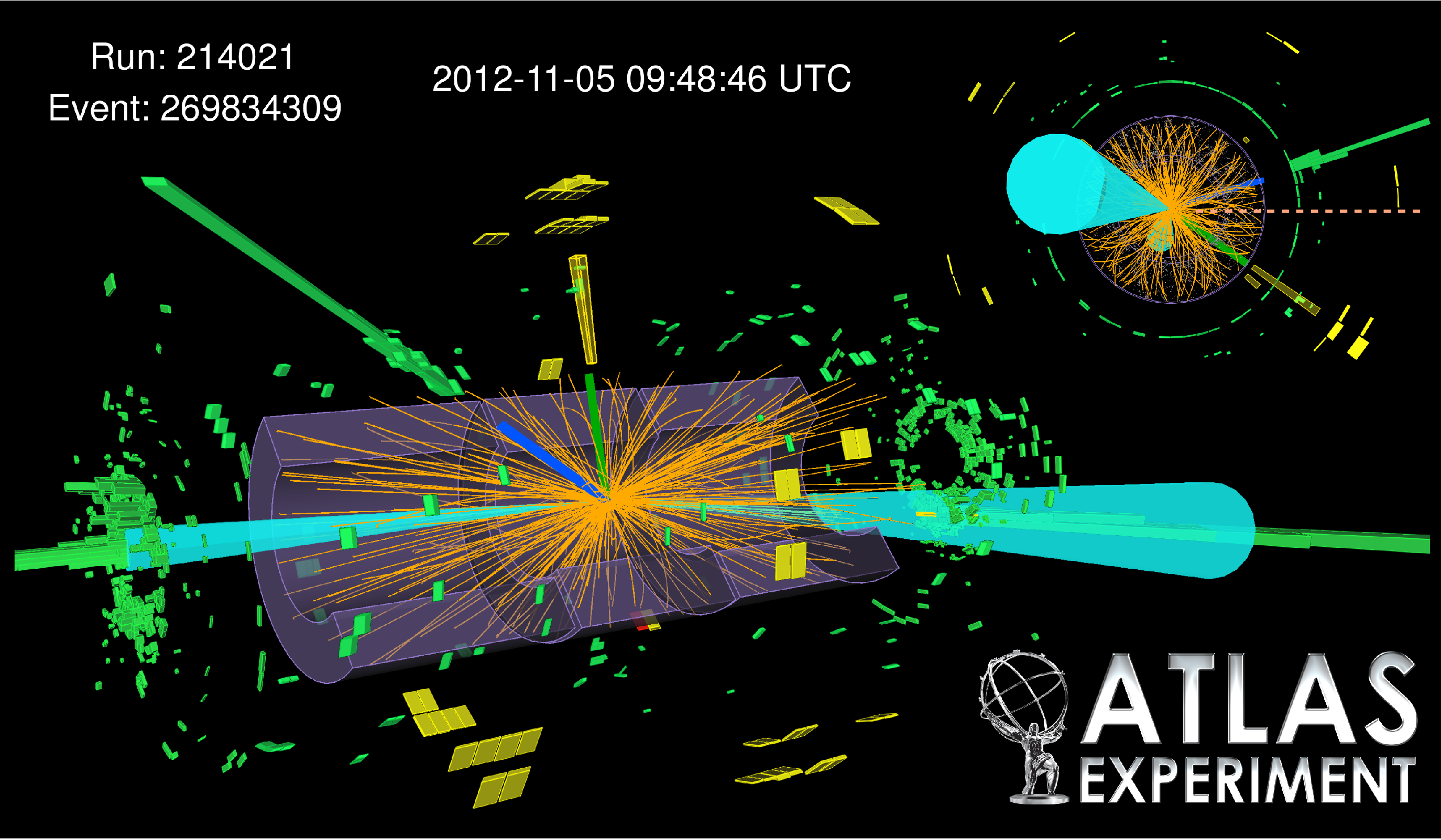

Once every particle is identified in the detector, physicists can select collisions in which a specific particle was produced. One example is the Higgs boson, which was the main goal of particle physics over the last decades and was discovered in 2012. I participated in its search at the Tevatron and in the evidence for one of its properties at the LHC. It is also very important to search for collisions that are not predicted by the Standard Model (or not at the same rate) in order to discover new laws of physics. I am participating in a search for new physics with the ATLAS detector in events with two leptons of the same electric charge.

Search for new physics in the top quark sector

The top quark is the heaviest known particle in the Standard Model and therefore deserves special attention. A search for new physics in the top quark sector can be performed in collisions with two leptons of the same electric charge and some b-jets. This final state is sensitive to many beyond-the-Standard-Model signatures and was already studied with LHC Run 1 data. The analysis is being repeated with LHC Run 2 data, and a preliminary public result based on a fraction of the Run 2 data is available. A few details are given below concerning the physics motivations, the Run 1 results and the Run 2 update.

Top quark pair production

LHC collisions produce many top-antitop pairs, allowing top quark properties to be measured precisely. Once produced, a top quark decays almost immediately into a W boson and a b quark. Depending on how the two W bosons decay, this leads to three typical final states for top-antitop pairs, with 0, 1 or 2 leptons. This kind of collision is now well understood, and any deviation from expectations could be a sign of new phenomena.
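The relative size of the three top-antitop final states follows from simple arithmetic on the W boson branching fractions. The sketch below assumes rounded values (about 10.8% per lepton flavour for W → ℓν, the rest hadronic) and counts tau leptons as leptons. Analyses that count only electrons and muons get smaller dilepton fractions.

```python
# Approximate branching fraction of W -> l nu for ONE lepton flavour (e, mu or tau).
# Rounded, illustrative value.
BR_W_LEPTON_PER_FLAVOUR = 0.108


def ttbar_final_states(br_lep_per_flavour: float = BR_W_LEPTON_PER_FLAVOUR) -> dict:
    """Fractions of top-antitop events by number of leptons from the two W decays."""
    br_l = 3 * br_lep_per_flavour   # W -> any lepton flavour
    br_h = 1.0 - br_l               # W -> quarks (hadronic)
    return {
        "dilepton": br_l ** 2,               # both W bosons decay leptonically
        "lepton+jets": 2 * br_l * br_h,      # either W can be the leptonic one
        "all-hadronic": br_h ** 2,           # both W bosons decay hadronically
    }


if __name__ == "__main__":
    for name, fraction in ttbar_final_states().items():
        print(f"{name:>13}: {fraction:.3f}")
```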

Four-top-quark final state - Run 1

The question is: how many top quarks can be produced in a single collision? (Top quarks can in fact also be produced singly, via the electroweak interaction rather than the strong interaction responsible for pair production.) The approach here is to search for new physics in four-top-quark production. The rate predicted by the Standard Model is very low, and the signature is spectacular enough to be separated from known processes. But how could new particles or interactions enhance four-top-quark production? In the Standard Model there is no direct (contact) interaction between top quarks: they only interact through the exchange of other particles, mostly gluons. Let us assume there is a new interaction between top quarks mediated by a very heavy particle (too heavy to be produced directly at the LHC). At LHC energies, this interaction would appear as a direct interaction between top quarks, leading to four-top-quark production. The interesting feature of this approach is that the experimental signature does not depend on the detailed structure of the new interaction.
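Such an effective interaction is often written as a dimension-six four-fermion operator involving right-handed top quarks, suppressed by the square of the heavy mass scale Λ. This form is given here as an illustration, not necessarily the exact parametrization of the analysis:

$$ \mathcal{L}_{4t} = \frac{C_{4t}}{\Lambda^2}\,\left(\bar{t}_R \gamma^{\mu} t_R\right)\left(\bar{t}_R \gamma_{\mu} t_R\right) $$

Only the combination $C_{4t}/\Lambda^2$ enters at LHC energies, which is what makes the signature independent of the details of the heavy mediator. The four-top cross-section becomes a quadratic function of $C_{4t}/\Lambda^2$, so an upper limit on the cross-section translates into an upper limit on $|C_{4t}|/\Lambda^2$.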

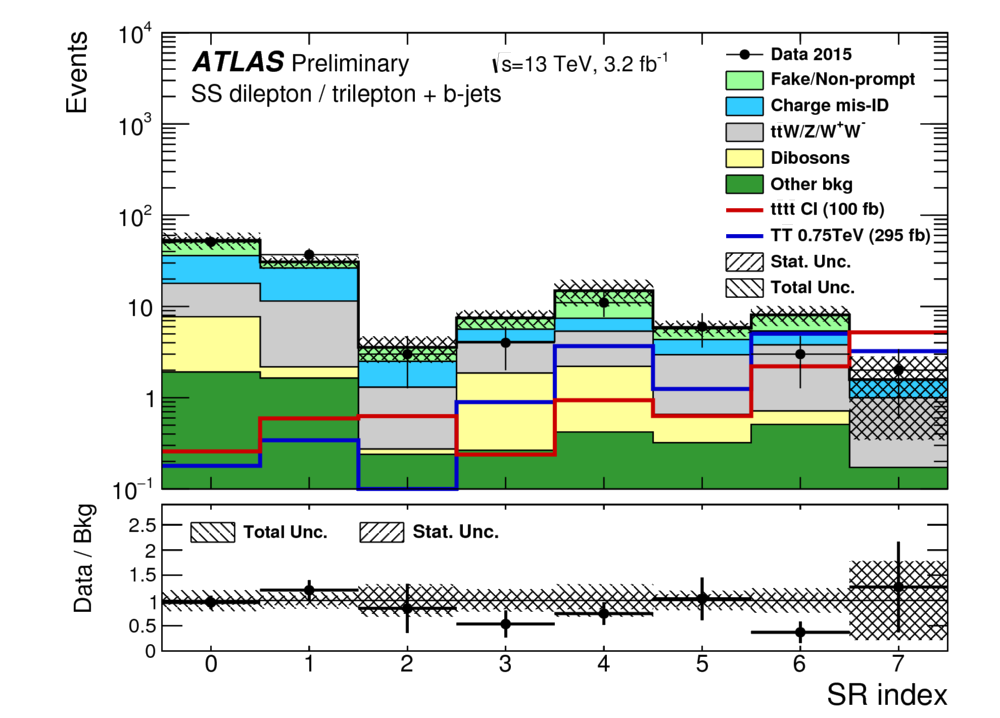

Selecting collisions with two leptons of the same electric charge is a way to isolate four-top-quark production. Few Standard Model processes lead to such a signature, so the dominant backgrounds in this analysis are mostly related to detector effects, for example a wrong charge assignment. In that case, processes creating two opposite-sign leptons (like top-antitop pair production) can be wrongly identified as same-sign dilepton collisions. The data collected by ATLAS during Run 1 were analyzed, and an interesting excess of observed events was found with respect to the expected background. This result was used to set limits on new physics models and was published in JHEP 10 (2015) 150.
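A common data-driven way to estimate this charge mis-assignment background is to weight each opposite-sign (OS) event by the probability that exactly one lepton charge was flipped. The sketch below is a generic version of that idea, not the exact procedure of the analysis. The per-lepton flip rates `eps1` and `eps2` are assumed inputs (in practice they would be measured in data, e.g. in Z → ee events binned in pT and η).

```python
def charge_flip_weight(eps1: float, eps2: float) -> float:
    """Weight turning an observed opposite-sign event into a same-sign (SS) estimate.

    eps1, eps2: probabilities that lepton 1 / lepton 2 has its charge mis-measured
    (hypothetical per-lepton rates, assumed to be below 0.5).
    """
    p_one_flip = eps1 + eps2 - 2 * eps1 * eps2     # exactly one flip: true OS seen as SS
    p_seen_os = 1 - eps1 - eps2 + 2 * eps1 * eps2  # no flip or both flipped: seen as OS
    return p_one_flip / p_seen_os


def estimate_charge_flip_background(os_events) -> float:
    """Predicted SS yield from OS events, each given as a pair (eps1, eps2)."""
    return sum(charge_flip_weight(e1, e2) for e1, e2 in os_events)
```

Dividing by the probability of observing the event as OS corrects for the fact that the OS sample itself is slightly depleted by charge flips.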

Four-top-quark final state - Run 2 update

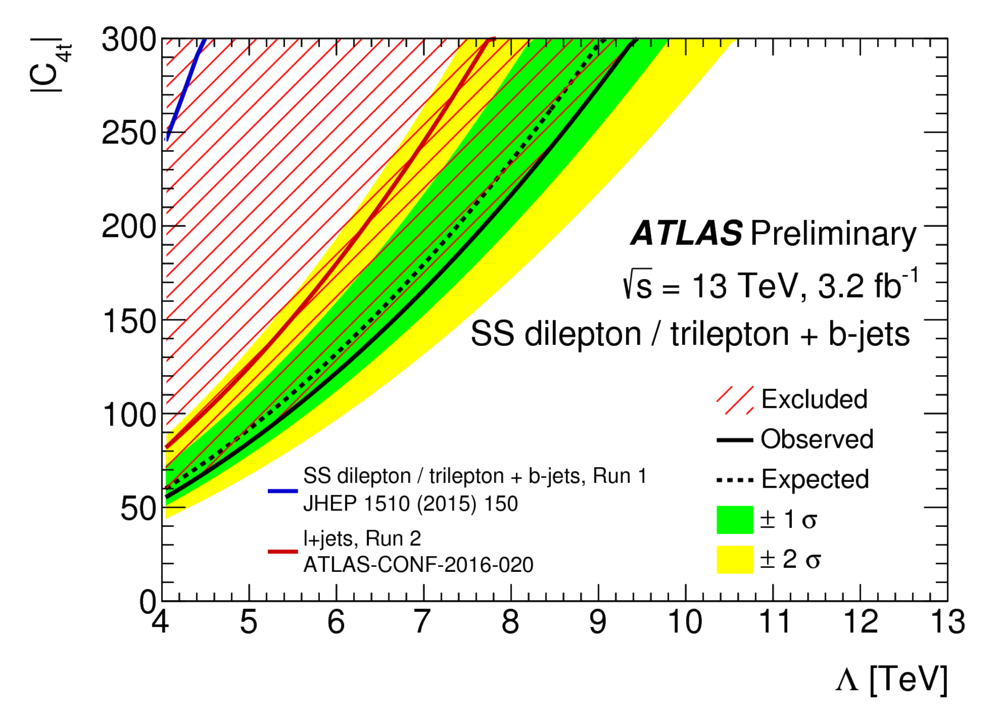

In June 2016, we released a preliminary result based on the Run 2 data collected in 2015, at a center-of-mass energy about 60% higher (13 TeV instead of 8 TeV), detailed in ATLAS-CONF-2016-032. The constraints are much more stringent (see figure), especially for processes requiring high energy such as four-top-quark production, but the modest Run 1 excess is no longer present (with 3.2 fb-1 of data). This result was presented at several international conferences, such as LHCP2016 and ICHEP2016. An update with more data is now in preparation.
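To illustrate how such constraints are derived, here is a minimal sketch of the CLs method for a single-bin counting experiment with known background and no systematic uncertainties. This is a deliberate simplification: the actual ATLAS result relies on a full likelihood with several signal regions and uncertainties. The function names are hypothetical.

```python
import math


def poisson_cdf(n: int, mu: float) -> float:
    """P(N <= n) for a Poisson variable N of mean mu."""
    term = math.exp(-mu)
    total = term
    for k in range(1, n + 1):
        term *= mu / k
        total += term
    return total


def cls_value(s: float, b: float, n_obs: int) -> float:
    """CLs = CL_{s+b} / CL_b for a single-bin counting experiment."""
    return poisson_cdf(n_obs, s + b) / poisson_cdf(n_obs, b)


def cls_upper_limit(b: float, n_obs: int, cl: float = 0.95) -> float:
    """Signal yield s excluded at confidence level cl (where CLs drops to 1 - cl)."""
    alpha = 1.0 - cl
    lo, hi = 0.0, 1.0
    while cls_value(hi, b, n_obs) > alpha:  # grow the bracket until s is excluded
        hi *= 2.0
    for _ in range(100):                    # bisection: CLs decreases with s
        mid = 0.5 * (lo + hi)
        if cls_value(mid, b, n_obs) > alpha:
            lo = mid
        else:
            hi = mid
    return hi
```

A cross-section limit then follows as σ < s_up / (ε L), where ε is the selection efficiency and L the integrated luminosity. Because signal cross-sections grow with collision energy, a 13 TeV dataset can give stronger constraints than a larger 8 TeV one.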

Detector R&D for the High Luminosity LHC

The ATLAS hadronic calorimeter

The ATLAS hadronic calorimeter is the ATLAS subdetector dedicated to measuring the energy of hadrons. Hadrons are particles made of quarks (such as protons, neutrons or pions) and are abundantly produced in proton-proton collisions. The calorimeter is made of alternating layers of steel plates and scintillating tiles. Incoming hadrons interact in the steel, producing showers of many secondary particles, and the scintillating tiles emit light when these secondary particles pass through them. This light, which reflects the energy of the initial hadron, is collected by optical fibres and converted into an electric signal by photomultipliers.
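Because only the energy deposited in the scintillator is sampled, the measured signal fluctuates statistically with the number of detected shower particles. This gives the usual stochastic term a/√E of a sampling calorimeter. A constant term c from calibration and non-uniformities is added in quadrature. The sketch below uses a = 50% and c = 3% as illustrative values of the order often quoted for hadronic calorimetry, not as official ATLAS numbers.

```python
import math


def relative_resolution(energy_gev: float, stochastic: float, constant: float) -> float:
    """sigma_E / E for a sampling calorimeter.

    stochastic: coefficient a of the a / sqrt(E) term (E in GeV), e.g. 0.5 for 50%.
    constant:   energy-independent term c (calibration, non-uniformities).
    The two terms are added in quadrature.
    """
    if energy_gev <= 0:
        raise ValueError("energy must be positive")
    return math.hypot(stochastic / math.sqrt(energy_gev), constant)


if __name__ == "__main__":
    for e in (10.0, 100.0, 1000.0):
        print(f"E = {e:6.0f} GeV: sigma/E = {relative_resolution(e, 0.5, 0.03):.3f}")
```

The relative resolution improves with energy until the constant term dominates.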

High Luminosity LHC

The High Luminosity LHC (HL-LHC) is a project, planned for 2023, to upgrade the LHC so that it produces proton-proton collisions at a much higher rate than today. This will make it possible to capture rare phenomena and to test the Standard Model with higher precision, especially in the Higgs sector. The detectors are also being upgraded to cope with the much higher radiation exposure and the unprecedented collision rate. The HL-LHC project was approved by the CERN Council in June 2016 and is therefore going ahead.
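The "unprecedented collision rate" can be expressed as the mean number of simultaneous proton-proton interactions per bunch crossing (pile-up): μ = L σ_inel / (n_b f_rev). The inputs below are assumptions chosen for illustration: a levelled HL-LHC luminosity of 5×10^34 cm^-2 s^-1, an inelastic cross-section of about 80 mb, 2808 bunches, and the LHC revolution frequency of about 11245 Hz.

```python
LHC_REVOLUTION_FREQUENCY_HZ = 11245.0  # approximate LHC revolution frequency
MB_TO_CM2 = 1e-27                      # 1 millibarn = 1e-27 cm^2


def mean_pileup(lumi_cm2_s: float, sigma_inel_mb: float, n_bunches: int,
                f_rev_hz: float = LHC_REVOLUTION_FREQUENCY_HZ) -> float:
    """Average number of inelastic pp interactions per bunch crossing."""
    interaction_rate_hz = lumi_cm2_s * sigma_inel_mb * MB_TO_CM2
    crossing_rate_hz = n_bunches * f_rev_hz
    return interaction_rate_hz / crossing_rate_hz


if __name__ == "__main__":
    print(f"LHC design (1e34):  mu ~ {mean_pileup(1e34, 80.0, 2808):.0f}")
    print(f"HL-LHC     (5e34):  mu ~ {mean_pileup(5e34, 80.0, 2808):.0f}")
```

With these inputs, the pile-up grows from about 25 interactions per crossing at the LHC design luminosity to more than 120 at the HL-LHC.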

Electronic readout system

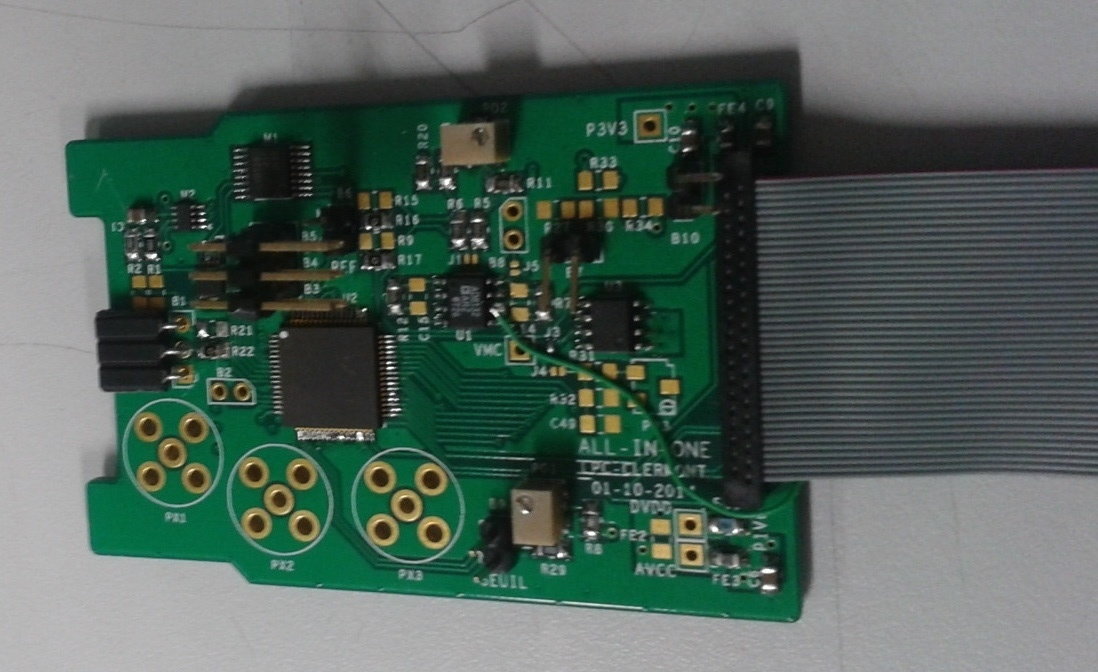

The readout electronics is the last step before signal processing. It reads the charge delivered by the photomultiplier, shapes the pulse and digitizes it. The digitized pulse can then be analyzed to extract the energy corresponding to the collected light and the time at which it was deposited. The readout electronics must meet several specifications in terms of precision, noise and response linearity, but also in terms of radiation hardness. I am involved in the design and testing of an Application-Specific Integrated Circuit (ASIC), called FATALIC, which reads the photomultiplier signal while fulfilling all the specifications required by the physics.
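A minimal sketch of the "extract the energy" step: fit the digitized samples with a known pulse shape plus a constant pedestal using linear least squares. This is a simplified stand-in for the weighted-sum ("optimal filtering") methods typically used in calorimeter reconstruction. It assumes the pulse phase is known and the noise is uncorrelated. The Gaussian template, its width and the 25 ns sampling are hypothetical choices, and extracting the time would require an additional term (e.g. the template derivative).

```python
import math


def pulse_template(t_ns: float, width_ns: float = 20.0) -> float:
    """Hypothetical normalised pulse shape (Gaussian with peak value 1 at t = 0)."""
    return math.exp(-0.5 * (t_ns / width_ns) ** 2)


def fit_amplitude_pedestal(samples, template):
    """Linear least-squares fit of samples[i] = amplitude * template[i] + pedestal.

    Assumes the pulse phase is known and the noise is uncorrelated and identical
    on every sample. Returns (amplitude, pedestal) in ADC counts.
    """
    n = len(samples)
    sum_g = sum(template)
    sum_s = sum(samples)
    sum_gg = sum(g * g for g in template)
    sum_gs = sum(g * s for g, s in zip(template, samples))
    denom = n * sum_gg - sum_g * sum_g
    if denom == 0:
        raise ValueError("template must not be constant")
    amplitude = (n * sum_gs - sum_g * sum_s) / denom
    pedestal = (sum_s - amplitude * sum_g) / n
    return amplitude, pedestal


# Seven samples taken every 25 ns around the pulse peak (illustrative choice).
SAMPLE_TIMES_NS = [-75.0, -50.0, -25.0, 0.0, 25.0, 50.0, 75.0]
TEMPLATE = [pulse_template(t) for t in SAMPLE_TIMES_NS]
```

The fitted amplitude is then converted into energy with calibration constants, for example obtained from charge injection.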

FATALIC is designed in a 130 nm technology and is built from three blocks: a current conveyor able to read a current directly (rather than a voltage), a shaper, and an Analog-to-Digital Converter (ADC). One feature of this readout system is that it has three different gains, in order to achieve very good precision over a large dynamic range.
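The benefit of several gains can be illustrated by a simple selection rule: digitize the signal on every gain and keep the highest gain that is not saturated. Small signals then get the finest resolution, while large signals are still measured. The sketch below is a generic illustration. The 12-bit full scale, the gain values (in ADC counts per pC), the pedestal and the selection logic are hypothetical, not the actual FATALIC parameters or implementation.

```python
ADC_MAX = 4095  # hypothetical 12-bit ADC full scale
# Hypothetical gains in ADC counts per pC, ordered from highest to lowest.
GAINS = (("high", 64.0), ("medium", 8.0), ("low", 1.0))


def select_gain(charge_pc: float, pedestal: int = 50):
    """Return (gain name, ADC value, reconstructed charge in pC).

    The highest non-saturated gain is kept; if every gain saturates, the
    low-gain value is returned clipped at ADC_MAX.
    """
    for name, gain in GAINS:
        adc = round(pedestal + gain * charge_pc)
        if adc < ADC_MAX:
            return name, adc, (adc - pedestal) / gain
    name, gain = GAINS[-1]
    return name, ADC_MAX, (ADC_MAX - pedestal) / gain  # saturated on all gains
```

For example, with these assumed values a 1 pC signal is read on the high gain, while a 100 pC signal saturates it and is read on the medium gain.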

Testing prototypes with particle beams ...

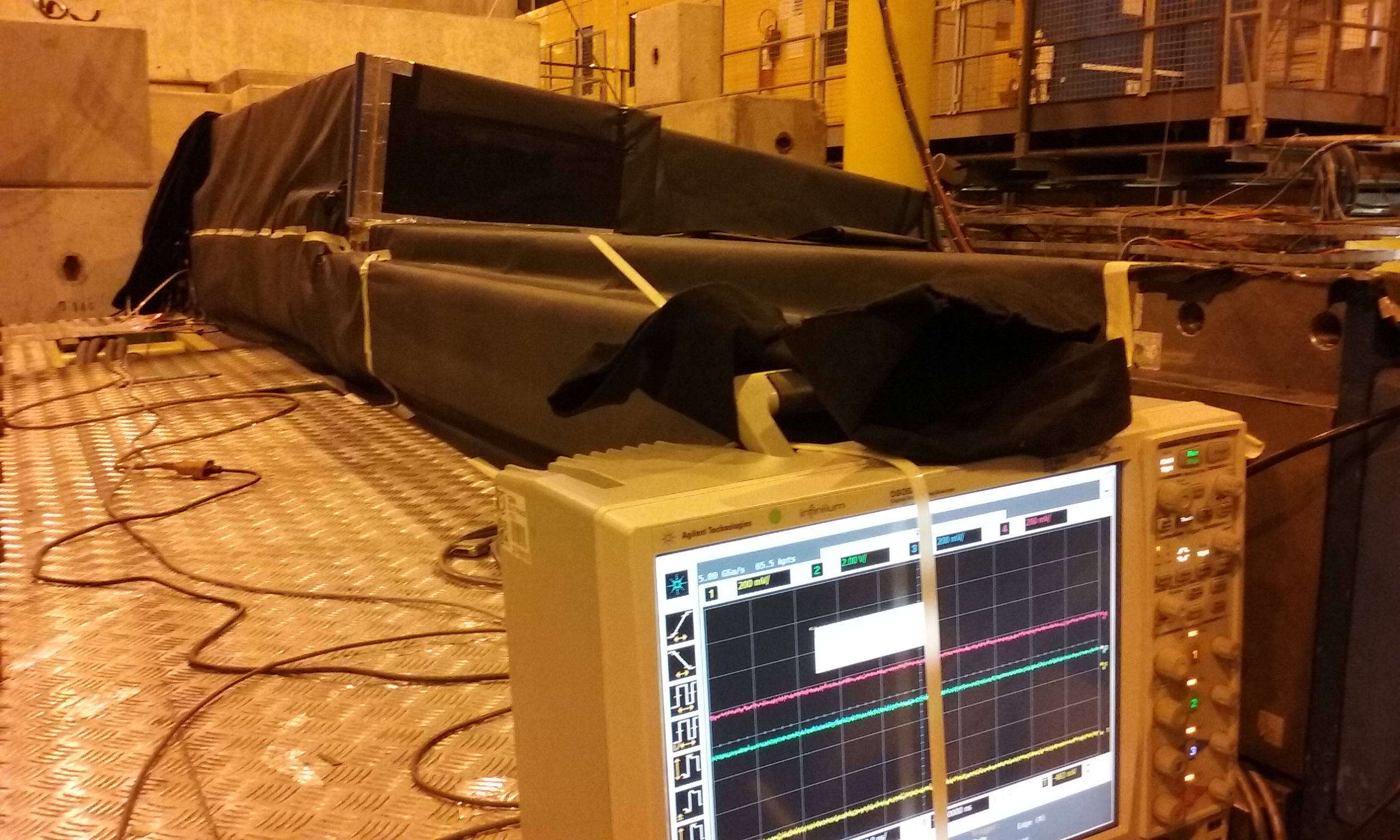

A prototype module at CERN is used to test the whole system (low- and high-voltage power supplies, data acquisition system, noise of the complete electronic chain, etc.). This demonstrator also makes it possible to compare the various readout electronics options, which are designed by different labs. In practice, many tests can be done without real particles, for example using an electronic charge injection system (which is also needed for in-situ calibration). The communication between the different components of the readout chain (very front-end, front-end, back-end) is also studied on this demonstrator. However, at some point it is crucial to see how the demonstrator reacts to real particles, and this is done using particle beams provided by the CERN facilities. The demonstrator then measures electrons, muons and pions at various incidence angles, so that the readout electronics can be tested under real conditions.

Higgs boson physics

The Higgs boson was searched for over many years before being discovered in 2012. The search strategy was broad, in order to cover all possible experimental signatures. Its mass was not predicted, and the mass strongly affects how the Higgs boson decays and therefore how the detector "sees" it. There are two types of decay modes, which give access to different properties of the Higgs sector:

- fermionic decay modes reflect the Yukawa coupling terms, which are not constrained by any symmetry, as in the H → ττ search below.

- bosonic decay modes reflect the behaviour of the Higgs field under the electroweak symmetry, as in the DØ H → WW search below.

Search and evidence for Higgs boson decaying into tau leptons in ATLAS

Unlike the couplings to electroweak bosons, the couplings between the Higgs boson and fermions are not constrained by any symmetry. Measuring the branching ratio of the Higgs boson into a fermion pair therefore tests a part of the Standard Model, called the Higgs Yukawa sector, which has no fundamental reason to be the way we think it is. Two fermions provide a good opportunity to probe the Yukawa part of the Higgs sector experimentally: the b quark and the tau lepton. Both have a significant branching ratio, but both are extremely challenging experimentally.

Focusing on the tau-tau final state, the detector sees three different final states depending on how each tau lepton decays (see the section on tau leptons below). These are tauleptaulep (dilepton final state), tauleptauhad (a lepton plus a hadronically decaying tau lepton) and tauhadtauhad (two hadronically decaying tau leptons). Since tau leptons decay before reaching the detector, they have to be identified from their decay products. Hadronically decaying tau leptons are particularly challenging to identify among the jets that are abundantly produced at hadron colliders (see the section on tau lepton identification below). On top of this instrumental background from hadronic final states, there is an irreducible background in which a Z boson decays into a tau pair, giving exactly the same final state as the collisions we are looking for.
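The relative size of the three ditau final states follows directly from the tau branching fractions. The sketch below uses the approximate split quoted in the tau lepton section of this page (about 35% leptonic, 65% hadronic). It shows that the lepton plus hadronic tau channel is the most frequent one.

```python
BR_TAU_LEPTONIC = 0.35  # tau -> e or mu plus neutrinos (approximate value)


def ditau_final_states(br_lep: float = BR_TAU_LEPTONIC) -> dict:
    """Fractions of tau-tau events in each final state, assuming independent decays."""
    br_had = 1.0 - br_lep
    return {
        "lep-lep": br_lep ** 2,
        "lep-had": 2 * br_lep * br_had,  # either tau can be the leptonic one
        "had-had": br_had ** 2,
    }


if __name__ == "__main__":
    for name, fraction in ditau_final_states().items():
        print(f"{name}: {fraction:.3f}")
```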

I mostly contributed to the tauleptauhad analysis, which exploits many properties to separate the signal from the fake tau lepton background and from Z boson production. For example, selecting collisions in which the (tau, tau) system is highly boosted significantly reduces the background while keeping a good fraction of the signal. One of the biggest achievements was to use a multivariate analysis (MVA) to exploit these numerous properties together optimally. This brought the sensitivity high enough to actually see Higgs boson production in the tau-tau decay mode. In the end, the analysis was based on six categories of events, classified according to their topology, each with a dedicated set of observables entering the final MVA.

This channel is particularly relevant because it gives access to one of the fingerprints of the Higgs boson. A specific feature of the Higgs boson is that its couplings to other particles follow a very simple law: they are proportional to the mass of the particle for fermions (and to the mass squared for the W and Z bosons). The couplings between the electroweak bosons and the Higgs boson are well measured: Higgs boson decays into two vector bosons (W, Z or photon) were the golden channels for the discovery. But what about the fermions? The coupling to the top quark is well known, but only indirectly accessible. The direct observation of a coupling between the Higgs boson and a fermion is provided by the tau-tau channel, and once again, the observation is in good agreement with the Standard Model prediction. For more details, see ATLAS-CONF-2015-044.
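In the Standard Model, this mass dependence can be written explicitly in terms of the Higgs vacuum expectation value $v$. Here $y_f$ is the Yukawa coupling of fermion $f$ and $g_{HVV}$ the coupling to $V = W, Z$:

$$ y_f = \frac{\sqrt{2}\,m_f}{v}, \qquad g_{HVV} = \frac{2\,m_V^2}{v}, \qquad v \approx 246~\text{GeV} $$

With $m_t \approx 173$ GeV and $m_\tau \approx 1.777$ GeV, this gives $y_t \approx 0.99$ and $y_\tau \approx 0.010$. Since the partial width $\Gamma(H \to f\bar{f})$ scales as $y_f^2$, the decay rate into a fermion pair grows as $m_f^2$ (up to colour and phase-space factors). As a result, the heaviest fermions kinematically accessible to a 125 GeV Higgs boson, the b quark and the tau lepton, give the largest fermionic decay rates.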

Search for Higgs boson decaying into WW in the mu+tau final state at DØ

The Tevatron collider was mostly sensitive to the WW decay channel for a Higgs boson mass around mH = 165 GeV, because for mH ≈ 2 mW the Higgs boson decays almost exclusively into WW. Experimentally, the e-e, e-mu and mu-mu final states are the easiest to identify and reconstruct, in particular at a hadron collider. However, before the LHC started, the Tevatron was the only collider able to constrain the Higgs sector, and it was important to exploit every possible channel, including those with tau leptons.

The strategy was therefore to search for the Higgs boson in mu+tau events coming from its WW decay, where one W decays into a muon and a neutrino and the other into a tau and a neutrino: $$ p\bar{p} \to H \to W^+W^- \to \mu\nu_{\mu}\,\tau\nu_{\tau} $$ Since tau leptons decay before reaching the detector, they have to be identified from their decay products. Hadronically decaying tau leptons are particularly challenging to identify among the jets abundantly produced at hadron colliders (see the section on tau lepton identification below). The main background of this search is therefore the production of a W boson in association with jets. In these events the W boson decays into a muon and a neutrino, and a jet is wrongly identified as a hadronically decaying tau lepton.

Even more important than reducing the background is estimating it accurately. This step is crucial, since we search for an excess of collisions with respect to a predicted number of background collisions. The number of background events from W+jets strongly depends on how often a jet mimics a hadronic tau (tauhad), which is difficult to model with simulation. A dedicated data-driven method was therefore developed to correct the simulation.
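One generic way to correct the simulation with data is to measure the jet → tauhad misidentification rate in both data and simulation in a W+jets-enriched control region. The ratio of the two rates is then applied as a scale factor to the simulated W+jets yield in the signal region. The sketch below illustrates this idea only. The control-region yields and function names are hypothetical, and the actual DØ method may differ in its details (binning, subtraction of other processes, uncertainties).

```python
def fake_rate(n_pass: float, n_total: float) -> float:
    """Fraction of jet-like tau candidates passing the tau identification."""
    if n_total <= 0:
        raise ValueError("n_total must be positive")
    return n_pass / n_total


def corrected_wjets_yield(mc_signal_region_yield: float,
                          data_pass: float, data_total: float,
                          mc_pass: float, mc_total: float) -> float:
    """Scale the simulated W+jets prediction by the data/simulation fake-rate ratio.

    The *_pass / *_total counts are hypothetical yields in a W+jets-enriched
    control region (other processes assumed already subtracted from data).
    """
    scale_factor = fake_rate(data_pass, data_total) / fake_rate(mc_pass, mc_total)
    return mc_signal_region_yield * scale_factor
```

For example, a fake rate of 3% in data against 2% in simulation would scale a simulated yield of 40 events up to 60.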

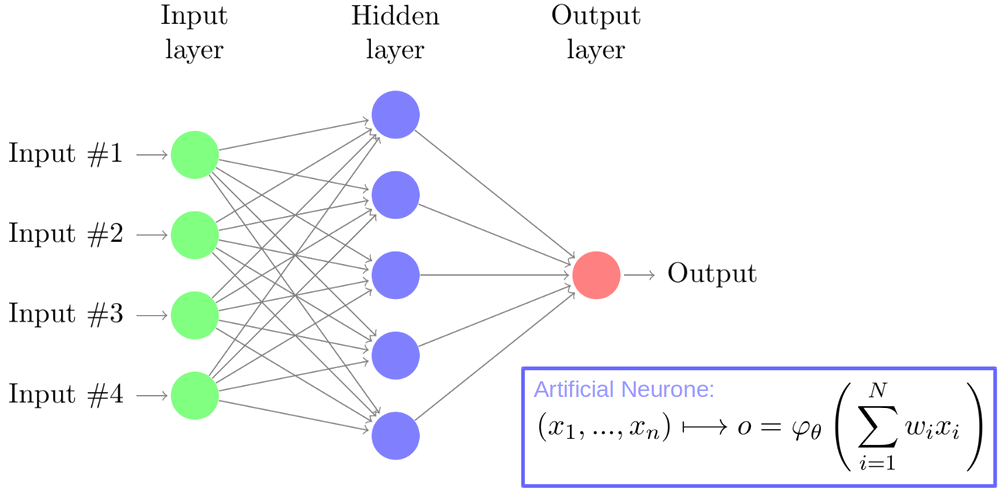

To separate background collisions from collisions where a Higgs boson was produced, a multivariate analysis (MVA) approach was adopted. Many observables that help identify signal collisions were combined in a Neural Network (NN). This builds an optimal discriminant exploiting all the physics features of the background and of Higgs boson production. With this strategy, the experimental sensitivity to Higgs boson production reached 8 times the SM predicted rate.

Tau leptons at hadron colliders

Why are tau leptons challenging?

The tau lepton is the heaviest known lepton, and it is also the only one that decays after a very short distance (~100 micrometers). Thus, to probe particles decaying into tau leptons, one needs to reconstruct the taus from their decay products. It also means that the reconstructed particles must be identified as coming from a tau lepton decay, which is not always trivial depending on the decay mode. There are two kinds of decay modes: leptonic decays (about 35%, with two neutrinos) and hadronic decays (about 65%, with one neutrino). A leptonic decay gives either an electron or a muon. These are relatively easy to reconstruct but practically impossible to distinguish from leptons produced directly in heavy boson decays. The hadronic decay mode is trickier because it splits into 3 main types of signature, depending on the hadrons produced in the decay. In addition, hadrons are abundantly produced in hadronic collisions and can easily mimic tau leptons.
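The ~100 micrometer figure is the proper decay length cτ ≈ 87 μm. In the laboratory frame, the mean flight distance is stretched by the boost factor βγ = p/m. The sketch below uses the tau mass (1.777 GeV) and cτ (87.03 μm). It shows that even a 40 GeV tau travels only about 2 mm, so it decays before reaching the detectors.

```python
TAU_MASS_GEV = 1.77686  # tau lepton mass
TAU_CTAU_UM = 87.03     # proper decay length c*tau in micrometres


def mean_decay_length_um(momentum_gev: float) -> float:
    """Mean lab-frame decay length L = beta*gamma*c*tau, with beta*gamma = p / m."""
    if momentum_gev < 0:
        raise ValueError("momentum must be non-negative")
    return momentum_gev / TAU_MASS_GEV * TAU_CTAU_UM


if __name__ == "__main__":
    for p in (10.0, 40.0, 100.0):
        print(f"p = {p:5.0f} GeV -> L ~ {mean_decay_length_um(p) / 1000:.1f} mm")
```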

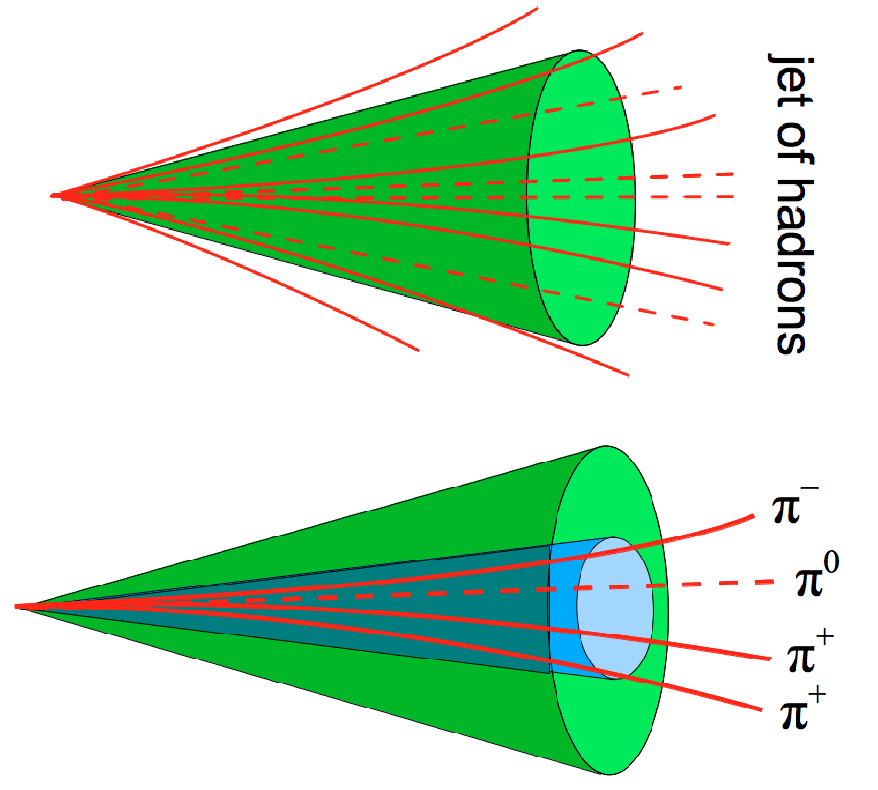

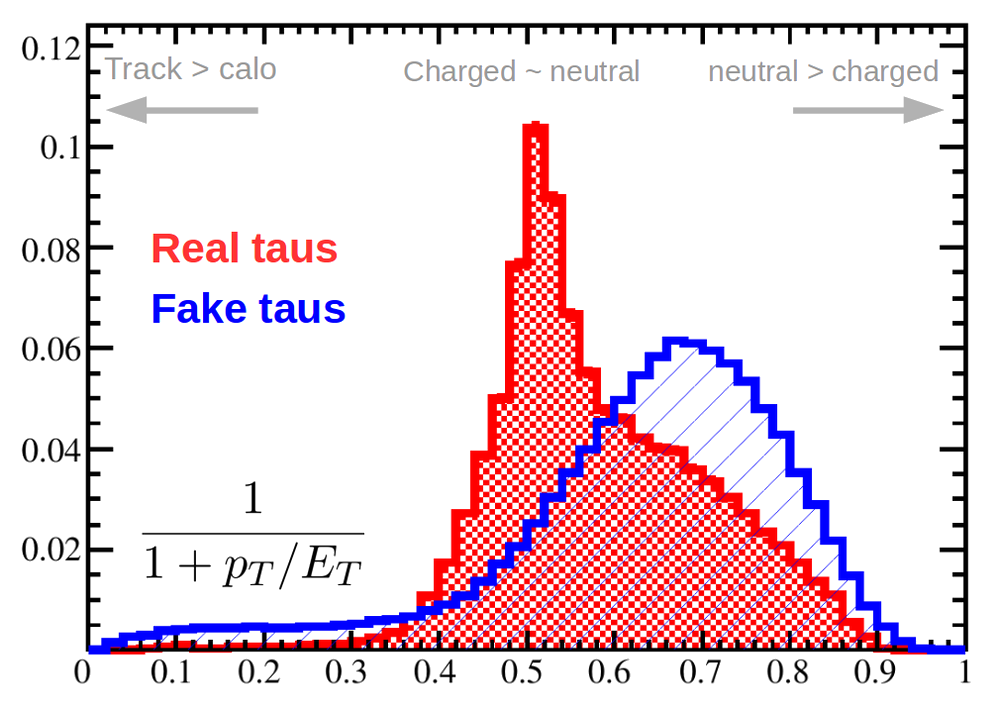

Since tau leptons mostly decay into hadrons, it is important to identify them among all the hadrons produced in a collision, in particular those from jets, the experimental signature of quarks and gluons. This is done by exploiting specific properties of the hadrons coming from the tau decay. For example, the hadrons from a tau are very collimated and few in number (mostly 1, 2 or 3). Looking around the most energetic hadron also shows whether the object is a hadronic tau (low activity, with few tracks and energy deposits) or a jet (high activity, with many tracks and energy deposits). The fraction of charged versus neutral hadrons is also a useful handle to distinguish hadronic taus from jets.
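The "collimated and isolated" criteria can be expressed with simple cone variables. These count tracks in a narrow core cone and in a surrounding isolation annulus around the candidate axis (in η-φ space), and compute the fraction of track pT contained in the core. The sketch below is illustrative. The cone sizes (0.2 and 0.4) and function names are assumptions, not the exact definitions used at DØ or ATLAS.

```python
import math


def delta_r(eta1: float, phi1: float, eta2: float, phi2: float) -> float:
    """Angular distance sqrt(d_eta^2 + d_phi^2), with d_phi wrapped into [-pi, pi)."""
    dphi = (phi1 - phi2 + math.pi) % (2.0 * math.pi) - math.pi
    return math.hypot(eta1 - eta2, dphi)


def cone_variables(axis, tracks, core_cone=0.2, outer_cone=0.4):
    """Simple tau-ID style variables around a candidate axis.

    axis: (eta, phi) of the candidate; tracks: list of (pt, eta, phi).
    Returns (tracks in the core cone, tracks in the isolation annulus
    core_cone <= dR < outer_cone, fraction of the pT within outer_cone
    that lies in the core cone).
    """
    n_core = n_iso = 0
    pt_core = pt_outer = 0.0
    for pt, eta, phi in tracks:
        dr = delta_r(axis[0], axis[1], eta, phi)
        if dr >= outer_cone:
            continue  # outside the region of interest
        pt_outer += pt
        if dr < core_cone:
            n_core += 1
            pt_core += pt
        else:
            n_iso += 1
    core_fraction = pt_core / pt_outer if pt_outer > 0 else 0.0
    return n_core, n_iso, core_fraction
```

A tau-like candidate typically gives 1 or 3 core tracks, an empty annulus and a core fraction close to 1. A jet tends to populate the annulus as well.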

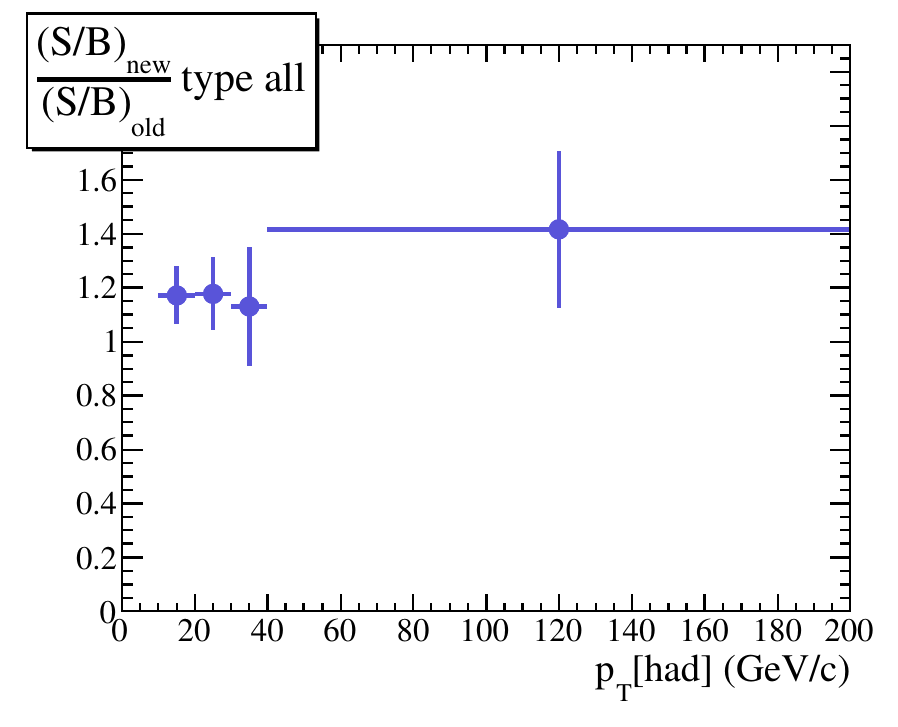

How to improve hadronic tau identification

I worked on several aspects of improving hadronic tau identification. First, I explored detailed properties of the hadronic decay. For example, in some decays an intermediate ρ meson is produced, which decays into a charged and a neutral pion. The two final pions then have a particular correlation when they come from a tau, which is not the case if they are produced randomly in a jet. At DØ, I developed a dedicated algorithm to reconstruct close-by photons from neutral pion decays, using the scintillating strip detector of DØ. Several other ideas were tested to better reject fake taus, based on physics but also on sophisticated data analysis techniques such as a neural network. The result was a significant improvement of tau identification: a 20% gain in the signal (true taus) over background (jets) ratio.
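The correlation mentioned above can be seen through the invariant mass. For a tau decaying via a ρ meson, the π±π0 invariant mass clusters near the ρ mass (about 0.775 GeV). Similarly, the two photons from a π0 decay reconstruct to about 0.135 GeV, whereas random particle pairs in a jet give a broad distribution. The sketch below only computes the invariant mass from four-vectors. Its function names are illustrative, and it is not the DØ reconstruction code.

```python
import math


def four_vector(mass: float, px: float, py: float, pz: float):
    """(E, px, py, pz) in GeV for a particle of the given mass and momentum."""
    energy = math.sqrt(mass ** 2 + px ** 2 + py ** 2 + pz ** 2)
    return (energy, px, py, pz)


def invariant_mass(*particles) -> float:
    """Invariant mass of a system of four-vectors (E, px, py, pz), in GeV."""
    e = sum(p[0] for p in particles)
    px = sum(p[1] for p in particles)
    py = sum(p[2] for p in particles)
    pz = sum(p[3] for p in particles)
    m2 = e * e - px * px - py * py - pz * pz
    return math.sqrt(max(m2, 0.0))  # guard against tiny negative rounding errors
```

For instance, two back-to-back photons of 0.0675 GeV each reconstruct to the π0 mass of 0.135 GeV.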